What?

We’re excited to announce that Preevy can now deploy your preview environments to any Kubernetes cluster! Kubernetes support is a major improvement to Preevy, and a big step in our journey to include and empower a broader community of developers.

Why?

Kubernetes-powered ephemeral preview environments are a faster, more scalable and more cost-effective alternative to VMs.

While preview environments live for the duration of a Pull Request, they typically see only short bursts of actual usage. Kubernetes is a great way to oversubscribe compute resources: environments can be configured to require little CPU and memory while they sit idle, waiting for a review.
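To make the oversubscription argument concrete, here is a small back-of-the-envelope sketch. It assumes the scheduler places Pods based on their CPU requests while limits cap how far each one can burst; the node size and the per-environment figures are made-up numbers, not Preevy defaults.

```typescript
// All CPU figures are in millicores (1000m = 1 core). The numbers are illustrative only.
interface EnvironmentResources {
  requestMillicores: number; // reserved by the scheduler while the environment idles
  limitMillicores: number;   // ceiling the environment may burst to during a review
}

// How many environments fit on a node when placement is based on requests.
function environmentsPerNode(nodeMillicores: number, env: EnvironmentResources): number {
  return Math.floor(nodeMillicores / env.requestMillicores);
}

// Ratio between the CPU all environments could burst to and the CPU the node really has.
function oversubscriptionRatio(nodeMillicores: number, env: EnvironmentResources): number {
  const count = environmentsPerNode(nodeMillicores, env);
  return (count * env.limitMillicores) / nodeMillicores;
}

const idleEnv: EnvironmentResources = { requestMillicores: 100, limitMillicores: 1000 };
console.log(environmentsPerNode(4000, idleEnv));   // 40
console.log(oversubscriptionRatio(4000, idleEnv)); // 10
```

With these numbers, a 4-core node hosts 40 idle environments, whereas a one-VM-per-environment setup would need 40 machines. The tradeoff is contention if many environments burst at the same time.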

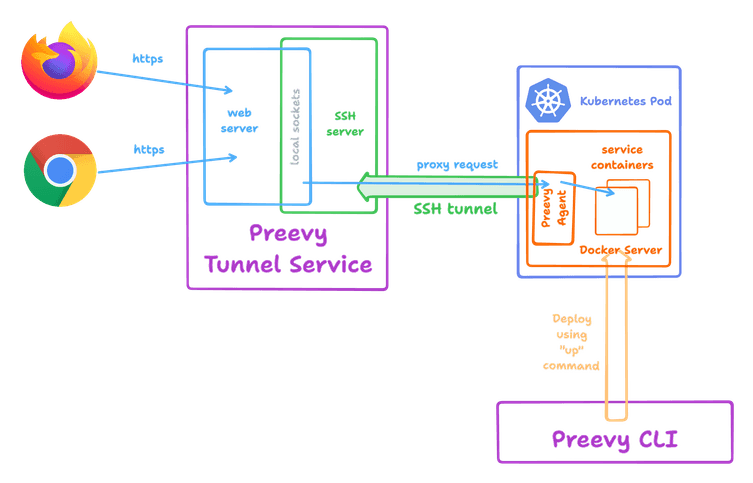

With Kubernetes, there’s no need to provision VMs. Instead, a single Pod is provisioned for every environment. This is about as fast as starting a Docker-in-Docker container.

How?

Preevy creates ephemeral preview environments by deploying your Docker Compose project on a server, and exposing it to the internet (or your private network) via a proxy service. Before Kubernetes support, Preevy needed to provision a VM with a Docker server for each environment. With Kubernetes, it provisions a Docker server as a Kubernetes Pod and runs your project inside it. Your services are still exposed using the Preevy Tunnel Service.

To start using Preevy with Kubernetes today, follow our Getting Started guide and see the Kubernetes Driver docs.

Preevy’s driver contract

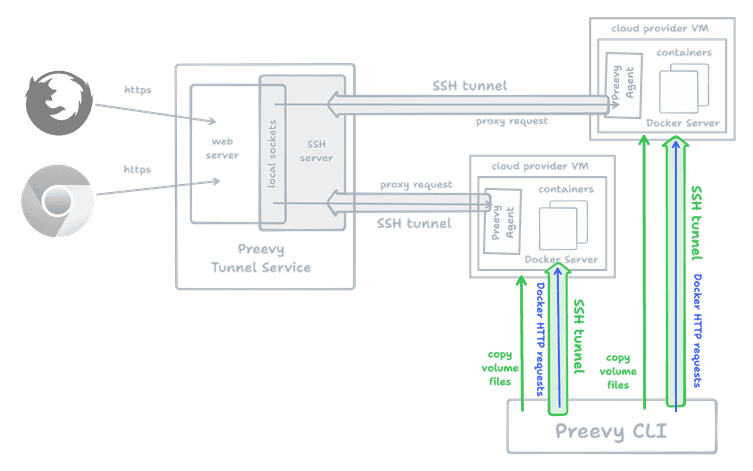

Drivers are Preevy’s way to offer an extensible framework for deploying environments on a variety of providers. The first driver we released was the AWS Lightsail driver, which deploys your environment on a budget-friendly VM on Amazon’s cloud. Since then, we’ve added support for Google Cloud and Microsoft Azure.

Common to all the VM drivers is the ability to connect to the provisioned machines using SSH.

Once a VM exists, SSH is used to copy any volume files, and finally to establish a port-forward session to the remote Docker Server in order to run the docker compose up command.

So it was only natural to have an SSH-based contract: a createMachine function which creates a VM (installing Docker if needed) and returns the machine’s public IP, an SSH keypair and a username.
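As a rough sketch, the SSH-based contract described above could be typed as follows. Every type, field and function name here is hypothetical and chosen for illustration; none of them are taken from Preevy's source. Building an OpenSSH command line is likewise an assumption, since the text only says that SSH is used to port-forward to the remote Docker Server.

```typescript
// Hypothetical shapes for illustration; the names are not taken from Preevy's source.
interface SshKeyPair {
  publicKey: string;
  privateKey: string;
}

interface SshMachine {
  publicIPAddress: string;
  sshKeyPair: SshKeyPair;
  sshUsername: string;
}

interface SshMachineDriver {
  // Creates a VM (installing Docker if needed) and returns what is needed to SSH into it.
  createMachine(envId: string): Promise<SshMachine>;
}

// Arguments for an OpenSSH client that forwards a local TCP port to the
// Docker socket on the VM. `-N` means "no remote command, forwarding only".
function dockerForwardArgs(machine: SshMachine, localPort: number, keyPath: string): string[] {
  return [
    '-i', keyPath,
    '-N',
    '-L', `${localPort}:/var/run/docker.sock`,
    `${machine.sshUsername}@${machine.publicIPAddress}`,
  ];
}

const exampleMachine: SshMachine = {
  publicIPAddress: '203.0.113.10', // address from a documentation-only range
  sshKeyPair: { publicKey: 'ssh-ed25519 AAAA...', privateKey: '...' },
  sshUsername: 'ubuntu',
};
console.log(['ssh', ...dockerForwardArgs(exampleMachine, 2375, './id_ed25519')].join(' '));
```

The point of the sketch is that everything the caller needs (address, credentials, username) is SSH-specific, which is what makes this contract awkward for providers that are not reachable over SSH.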

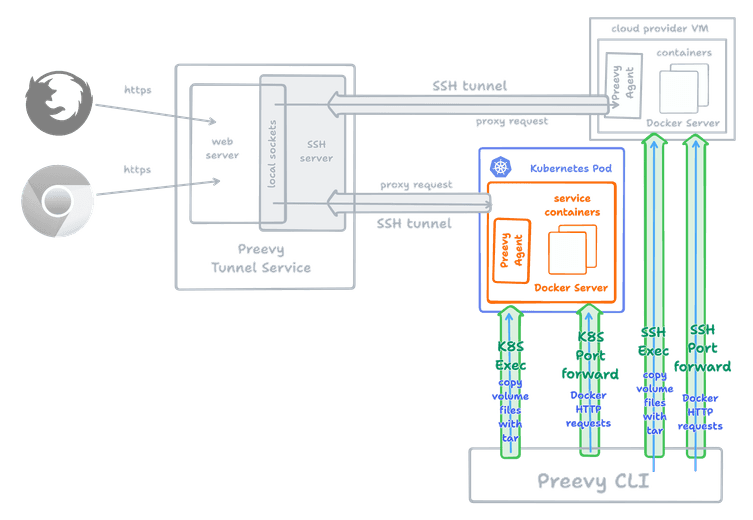

SSH makes less sense when deploying to Kubernetes. While it is possible to SSH into a Pod, doing so requires setting up an ingress and exposing the SSH port publicly, which is impractical for most installations. Instead, we had to redefine the driver’s contract to create a higher-level abstraction: createMachine now returns a “connection” object which can do the following:

- Execute a command: used to copy files (by running tar with a piped STDIN)
- Port-forward to the Docker Server

In the Kubernetes driver, the “connection” functionality is implemented using the Kubernetes exec and port-forward APIs.
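The connection-based contract can be sketched in the same spirit. The interface and function names below are hypothetical and are not Preevy's actual API; the sketch only illustrates how file copying reduces to "execute a command with piped STDIN", which is why the two operations listed above are enough.

```typescript
// Hypothetical names throughout; this is an illustration, not Preevy's actual interface.
interface ForwardedSocket {
  localAddress: string;   // where a local Docker client should connect
  close(): Promise<void>;
}

interface MachineConnection {
  // Runs a command on the machine, optionally piping data to its STDIN.
  exec(command: string[], stdin?: Uint8Array): Promise<{ exitCode: number }>;
  // Forwards a local address to the Docker Server running on the machine.
  portForward(): Promise<ForwardedSocket>;
  close(): Promise<void>;
}

interface MachineDriver {
  // Returns a connection instead of SSH details, so callers stay transport-agnostic.
  createMachine(envId: string): Promise<MachineConnection>;
}

// `tar -x -f -` extracts an archive read from STDIN into the target directory.
function tarExtractCommand(targetDir: string): string[] {
  return ['tar', '-x', '-f', '-', '-C', targetDir];
}

// Copying files needs nothing beyond exec: stream a tar archive to tar on the other side.
async function copyArchive(
  conn: MachineConnection,
  archive: Uint8Array,
  targetDir: string,
): Promise<void> {
  const { exitCode } = await conn.exec(tarExtractCommand(targetDir), archive);
  if (exitCode !== 0) {
    throw new Error(`tar exited with code ${exitCode}`);
  }
}
```

A VM driver could implement `exec` and `portForward` on top of SSH, while a Kubernetes driver could implement them on top of the exec and port-forward APIs, without the calling code noticing the difference.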

Customizing the Kubernetes resources

The Kubernetes driver introduces templates, a new mechanism to customize the deployed resources. For example, Kubernetes admins might want to tag the deployed resources using labels and annotations, maybe to provision them on a specific set of worker nodes. Or, some users will want to provision additional resources per environment, like a database.

To use a custom template, configure it in the preevy init command, or specify the --kube-pod-template argument to the preevy up command.

Here is an example template:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: {{ id }}-dc
      namespace: {{ namespace }}
    data:
      # Docker daemon configuration, mounted at /etc/docker in the Pod
      daemon.json: |
        {
          "tls": false
        }
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: {{ id }}
      namespace: {{ namespace }}
      labels:
        app: preevy-{{ id }}
        app.kubernetes.io/component: docker-host
    spec:
      selector:
        matchLabels:
          app: preevy-{{ id }}
      template:
        metadata:
          labels:
            app: preevy-{{ id }}
          annotations:
            # Sysbox on CRI-O: run the Pod in its own user namespace
            io.kubernetes.cri-o.userns-mode: "auto:size=65536"
        spec:
          # runtimeClassName is a Pod-level field, so it goes in the Pod template
          runtimeClassName: sysbox-runc
          containers:
            - name: docker
              image: nestybox/alpine-docker
              command: ["dockerd", "--host=tcp://0.0.0.0:2375", "--host=unix:///var/run/docker.sock"]
              volumeMounts:
                - mountPath: /etc/docker
                  name: docker-config
          volumes:
            - name: docker-config
              configMap:
                name: {{ id }}-dc

This template specifies a custom Docker image (nestybox/alpine-docker), a custom runtimeClassName and some annotations to allow running a rootless, non-privileged Docker Server environment using Sysbox.

Here is another template, which deploys a Zookeeper-free test Kafka broker with every environment:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: {{ id }}-dc
      namespace: {{ namespace }}
    data:
      daemon.json: |
        {
          "tls": false
        }
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: {{ id }}
      namespace: {{ namespace }}
      labels:
        app: preevy-{{ id }}
        app.kubernetes.io/component: docker-host
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: preevy-{{ id }}
      template:
        metadata:
          labels:
            app: preevy-{{ id }}
        spec:
          containers:
            - name: docker
              image: docker:24-dind
              securityContext:
                privileged: true
              command: ["dockerd", "--host=tcp://0.0.0.0:2375", "--host=unix:///var/run/docker.sock"]
              volumeMounts:
                - mountPath: /etc/docker
                  name: docker-config
              env:
                # Hostname of the per-environment Kafka Service defined below
                - name: KAFKA_SERVICE
                  value: {{ id }}-kafka
          volumes:
            - name: docker-config
              configMap:
                name: {{ id }}-dc
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      # Includes the environment ID so that environments sharing a namespace do not collide
      name: {{ id }}-kafka
      namespace: {{ namespace }}
    spec:
      replicas: 1
      selector:
        matchLabels:
          app: {{ id }}-kafka
      template:
        metadata:
          labels:
            app: {{ id }}-kafka
        spec:
          enableServiceLinks: false
          containers:
            - name: kafka-broker
              image: ghcr.io/livecycle/kafka-docker:lc-1.1.0
              ports:
                - containerPort: 9092
                  name: kafka
                  protocol: TCP
              env:
                - name: KAFKA_WITHOUT_ZOOKEEPER
                  value: 'true'
                # Advertise the Service hostname so that clients can resolve the broker
                - name: KAFKA_ADVERTISED_LISTENERS
                  value: PLAINTEXT://{{ id }}-kafka:9092
                - name: KAFKA_LISTENERS
                  value: PLAINTEXT://0.0.0.0:9092,CONTROLLER://0.0.0.0:9093
                - name: KAFKA_CONTROLLER_QUORUM_VOTERS
                  value: "1@localhost:9093"
                - name: CLUSTER_UUID
                  value: p8fFEbKGQ22B6M_Da_vCBw
              readinessProbe:
                failureThreshold: 10
                tcpSocket:
                  port: 9092
                periodSeconds: 3
                successThreshold: 1
                timeoutSeconds: 1
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: {{ id }}-kafka
      namespace: {{ namespace }}
    spec:
      ports:
        - port: 9092
          protocol: TCP
          targetPort: 9092
      selector:
        app: {{ id }}-kafka
      type: ClusterIP

This adds another Deployment and Service to the default template.

Note the use of the environment variable KAFKA_SERVICE to make the Kubernetes Kafka service hostname (suffixed with the unique environment ID) available to the environment at build time.
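The `{{ id }}` and `{{ namespace }}` placeholders in the templates above are filled in per environment. The following is a minimal sketch of that idea using plain string substitution. It is an assumption made for illustration: Preevy's actual template engine, and the full set of variables it exposes, may differ, and `renderTemplate` is a hypothetical helper.

```typescript
// Minimal placeholder substitution, assuming templates only use `{{ name }}` variables.
// This illustrates the idea; it is not Preevy's template engine.
function renderTemplate(template: string, vars: Record<string, string>): string {
  return template.replace(/\{\{\s*(\w+)\s*\}\}/g, (_match, name: string) => {
    const value = vars[name];
    if (value === undefined) {
      // Fail loudly rather than emit a manifest with an unresolved placeholder.
      throw new Error(`Unknown template variable: ${name}`);
    }
    return value;
  });
}

const serviceTemplate = [
  'kind: Service',
  'metadata:',
  '  name: {{ id }}-kafka',
  '  namespace: {{ namespace }}',
].join('\n');

// The ID and namespace values below are made up for the example.
console.log(renderTemplate(serviceTemplate, { id: 'my-env-1a2b', namespace: 'previews' }));
```

Because the environment ID is part of every resource name, two environments rendered from the same template end up with distinct Deployments and Services, even when they share a namespace.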

Summary

With Preevy and Kubernetes working together, you can now provision lightweight environments with less effort, reduced costs and tighter integration with your existing infrastructure. Upgrade to the latest version and give it a try!